JSAIT's first special issue will focus on the mathematical foundations of deep learning as well as applications across information science.

Welcome to the first issue of the Journal on Selected Areas in Information Theory (JSAIT) focusing on Deep Learning: Mathematical Foundations and Applications to Information Science.

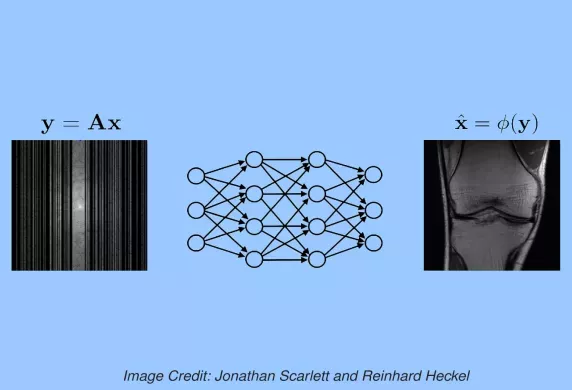

Reliable digital communication is a primary workhorse of the modern information age. The disciplines of communication, coding, and information theory drive innovation by designing efficient codes that allow transmissions to be decoded robustly and efficiently. Progress in near-optimal codes has come from individual human ingenuity, and breakthroughs have been, befittingly, sporadic and spread over several decades. Deep learning is now part of daily life, and many of its successes come in applications that lack a (mathematical) generative model: there, deep learning empirically fits neural network models to the data, and the results have been extremely potent. In yet other applications, the data is generated by a simple model, the performance criterion is mathematically precise, and training/test samples are infinitely abundant, but the space of algorithmic choices is enormous (example: chess). Deep learning has recently shown strong promise in these problems too (example: AlphaZero). The latter scenario is a good description of communication theory. The mathematical models underlying canonical communication channels allow one to sample an unlimited amount of data to train and test communication algorithms ((encoder, decoder) pairs), and the metric of bit (or block) error rate allows for mathematically precise evaluation. Motivated by the successes of deep learning in the mathematically well-defined and extremely challenging tasks of chess, Go, and protein folding, we posit that deep learning methods can play a crucial role in achieving core goals of coding theory: designing new (encoder, decoder) pairs that improve state-of-the-art performance over canonical channel models. This manuscript surveys recent advances toward demonstrating this hypothesis by focusing on strengthening a specific family of coding methods, namely sequential codes (convolutional codes) and codes that use them as basic building blocks (Turbo codes), via deep learning methods. New state-of-the-art results are derived on several canonical channels, including the AWGN channel with feedback.
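
The claim that a canonical channel model supplies unlimited test data and a precise error metric can be illustrated with a minimal sketch. It is not a method from the surveyed manuscript: it uses a simple repetition code with BPSK over an AWGN channel, and the function name `simulate_ber`, its parameters, and the per-symbol SNR convention are all assumptions made for illustration.

```python
import numpy as np

def simulate_ber(snr_db, num_bits=100_000, repeat=3, seed=0):
    """Monte Carlo bit error rate of a repetition code over an AWGN channel.

    Hypothetical helper for illustration only; SNR is defined per
    transmitted symbol (unit signal power over noise variance).
    """
    rng = np.random.default_rng(seed)
    # Sample fresh message bits: the channel model lets us draw as many as we like.
    bits = rng.integers(0, 2, size=num_bits)
    # Encoder: repeat each bit, then map 0 -> +1 and 1 -> -1 (BPSK).
    codeword = np.repeat(bits, repeat)
    symbols = 1.0 - 2.0 * codeword
    # Channel: add white Gaussian noise with variance 10^(-snr_db / 10).
    noise_std = np.sqrt(10.0 ** (-snr_db / 10.0))
    received = symbols + noise_std * rng.normal(size=symbols.shape)
    # Decoder: sum the soft values belonging to each bit and threshold at zero.
    soft = received.reshape(num_bits, repeat).sum(axis=1)
    decoded = (soft < 0).astype(int)
    # Metric: fraction of message bits decoded incorrectly.
    return float(np.mean(decoded != bits))

if __name__ == "__main__":
    for snr_db in (0.0, 3.0, 6.0):
        print(f"SNR {snr_db:.1f} dB: BER = {simulate_ber(snr_db):.5f}")
```

A learned (encoder, decoder) pair would replace the hand-written encoder and decoder steps while the sampling loop and the error metric stay the same.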

Inference capabilities of machine learning (ML) systems have skyrocketed in recent years, and these systems now play a pivotal role in various aspects of society. The goal in statistical learning is to use data to obtain simple algorithms for predicting a random variable Y from a correlated observation X. Since the dimension of X is typically huge, computationally feasible solutions should summarize it into a lower-dimensional feature vector T, from which Y is predicted. The algorithm will successfully make the prediction if T is a good proxy of Y, despite this dimensionality reduction. A myriad of ML algorithms (mostly employing deep learning (DL)) for finding such representations T based on real-world data are now available. While these methods are effective in practice, progress is hindered by the lack of a comprehensive theory that explains their success. The information bottleneck (IB) theory recently emerged as a bold information-theoretic paradigm for analyzing DL systems. Adopting mutual information as the figure of merit, it suggests that the best representation T should be maximally informative about Y while having minimal mutual information with X. In this tutorial we survey the information-theoretic origins of this abstract principle and its recent impact on DL. For the latter, we cover implications of the IB problem for DL theory, as well as practical algorithms inspired by it. Our goal is to provide a unified and cohesive description. A clear view of current knowledge is important for further leveraging IB and other information-theoretic ideas to study DL models.
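
The trade-off described above is commonly written as a Lagrangian over the stochastic map from X to T. The form below is the standard IB formulation, given here as a sketch; the trade-off parameter $\beta$ and the notation $P_{T|X}$ are not introduced in the abstract itself.

```latex
\min_{P_{T|X}} \; I(X;T) - \beta\, I(T;Y), \qquad \beta > 0,
\quad \text{subject to the Markov chain } Y \leftrightarrow X \leftrightarrow T .
```

Here $I(X;T)$ measures how much of X the representation retains (compression), $I(T;Y)$ measures how informative T is about Y (relevance), and larger $\beta$ favors relevance over compression.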